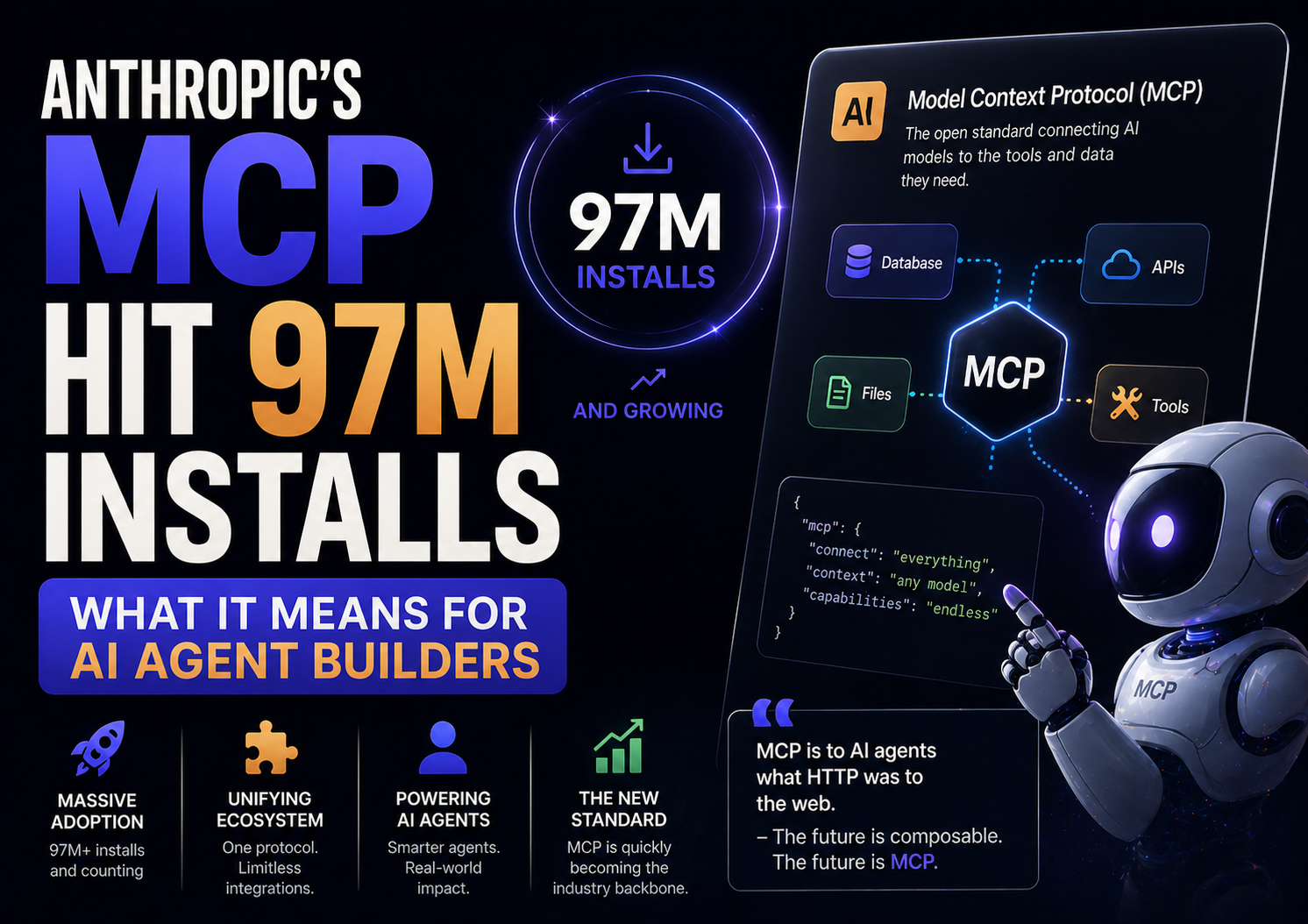

When a protocol crosses 97 million installs, something has shifted. Not “gaining traction” shifted. Infrastructure shifted. The kind where you stop asking “should I use this?” and start asking “can I afford not to?”

That’s where Anthropic’s Model Context Protocol is right now.

MCP crossed 97 million installs in March 2026. Every major AI provider now ships MCP-compatible tooling. The Linux Foundation took it under open governance. If you’re building anything with AI agents in 2026 and you’re not thinking about MCP, you’re building on a foundation that’s already becoming legacy.

What MCP Actually Solves

Before MCP, connecting an AI model to external tools was a custom job every single time. Want your agent to read a Google Doc? Write an integration. Pull from a database? Another integration. Call a Slack API? Another one. Every tool, every model, every team reinventing the same plumbing.

MCP standardizes that layer. It’s a protocol, not a product, which is the important distinction. Think of it like USB-C for AI agents. You build to the standard once, and things connect. The model doesn’t need to know how your database works. Your database doesn’t need to know which model is calling it. MCP sits in between and handles the handshake.
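To make the "handshake" concrete, here is a minimal sketch of what a tool call looks like on the wire. MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. Everything else here is an assumption for illustration: the `add` tool, the `TOOLS` registry, and the toy `handle` dispatcher are hypothetical stand-ins, not the official SDK.

```python
import json
from typing import Any, Callable

# Hypothetical tool registry standing in for whatever an MCP server exposes.
TOOLS: dict[str, Callable[..., Any]] = {
    "add": lambda a, b: a + b,
}


def make_tool_call(request_id: int, name: str, arguments: dict[str, Any]) -> str:
    """Build a JSON-RPC 2.0 request shaped like an MCP tools/call message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def error(request_id: Any, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "error": {"code": code, "message": message},
    })


def handle(raw: str) -> str:
    """Toy server-side dispatcher: find the named tool, run it, wrap the result."""
    req = json.loads(raw)
    if req.get("method") != "tools/call":
        return error(req.get("id"), -32601, "Method not found")
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return error(req.get("id"), -32602, f"Unknown tool: {params.get('name')}")
    result = tool(**params.get("arguments", {}))
    return json.dumps({
        "jsonrpc": "2.0",
        "id": req.get("id"),
        "result": {"content": [{"type": "text", "text": str(result)}]},
    })
```

The caller only needs the tool name and its arguments. How `add` works stays behind the server, and that separation is the whole point.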

That’s a genuinely useful abstraction. Not glamorous. Not demo-worthy. But the kind of infrastructure that actually matters at scale.

Why 97 Million Installs Is a Signal Worth Taking Seriously

Raw install numbers are easy to dismiss. But the MCP story has specific details that make the 97M figure meaningful.

First, every major AI provider ships MCP-compatible tooling now. That’s not “most” or “several.” Every major one. When competitors adopt a standard that one lab created, that’s a signal the market has decided something.

Second, the Linux Foundation governance move changes the long-term calculus. MCP is no longer an Anthropic bet you’re taking. It’s shared open infrastructure with independent stewardship. For enterprise teams evaluating build vs. buy decisions, that matters a lot. Vendor lock-in risk drops significantly when a neutral foundation governs the spec.

Third, the growth curve is steep. MCP went from experimental to foundational infrastructure in roughly 18 months. That’s fast even by AI standards.

What This Changes for Developers Building Agents

If you’re building AI agents today, MCP changes a few things practically.

Tool integration gets cheaper to maintain. Before MCP, every new tool your agent needed was a custom integration that you owned forever. With MCP, you’re adopting a shared protocol. When the tool’s MCP server updates, your agent typically picks up the change without a rewrite on your end.

Multi-model architectures become more viable. One of the annoying realities of building with LLMs is that switching models often means rebuilding integrations. MCP reduces that friction. If your tools speak MCP and your new model speaks MCP, the swap gets simpler. Not free, but simpler.
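A rough sketch of why the swap gets simpler: if the tool layer is defined once and every model only has to emit a tool name plus arguments, changing models leaves the tool code untouched. `ModelA`, `ModelB`, and the `search` tool below are hypothetical placeholders, not real providers or real MCP servers.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


@dataclass
class ToolCall:
    name: str
    arguments: dict


class Model(Protocol):
    """Anything that can turn a prompt into a tool call."""

    def next_call(self, prompt: str) -> ToolCall: ...


class ModelA:
    """Hypothetical model adapter: passes the prompt through as the query."""

    def next_call(self, prompt: str) -> ToolCall:
        return ToolCall("search", {"query": prompt})


class ModelB:
    """Hypothetical second adapter: same tool, different query style."""

    def next_call(self, prompt: str) -> ToolCall:
        return ToolCall("search", {"query": prompt.lower()})


# The tool layer is written once and shared by every model.
TOOLS: dict[str, Callable[..., str]] = {
    "search": lambda query: f"results for {query!r}",
}


def run_agent(model: Model, prompt: str) -> str:
    """Ask the model for a tool call, then execute it against the shared tools."""
    call = model.next_call(prompt)
    return TOOLS[call.name](**call.arguments)
```

Swapping `ModelA()` for `ModelB()` in `run_agent` changes the query the model produces, not the integration. The "not free" part is everything this sketch leaves out: prompt tuning, differences in how models choose tools, and evaluation.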

The agent ecosystem gets denser. More MCP servers means more prebuilt connectors your agents can use. Search, calendar, CRM, databases, communication tools: most of these already have MCP servers in active development. You’re increasingly assembling from parts rather than building from scratch.

Security surface needs more thought. More connections mean more attack surface. MCP standardizes the protocol, not the security model. As agents gain more tool access, the question of what they’re allowed to do and how that’s audited becomes more urgent. This is the part most tutorials skip.
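Since the protocol leaves the security model to you, one simple starting point is an explicit allowlist with an audit trail wrapped around every tool call. This is a minimal sketch under assumed names (`ToolPolicy`, `guarded_call`, and the example tools are all hypothetical), not a feature of MCP itself.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class ToolPolicy:
    """Allowlist of tool names plus a record of every authorization decision."""

    allowed: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, agent: str, tool: str) -> bool:
        decision = tool in self.allowed
        # Log denied attempts too: those are often the interesting ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "tool": tool,
            "allowed": decision,
        })
        return decision


def guarded_call(policy: ToolPolicy, agent: str, tool: str,
                 fn: Callable[..., Any], **arguments: Any) -> Any:
    """Run the tool only if the policy allows it; otherwise raise."""
    if not policy.authorize(agent, tool):
        raise PermissionError(f"{agent} may not call {tool}")
    return fn(**arguments)
```

A real deployment would likely need per-argument checks, scoped credentials, and durable log storage. The sketch only shows where the checkpoint sits: between the agent's decision and the tool's execution.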

Who Should Be Paying Attention Right Now

Developers building production AI agents. If you’re not already evaluating MCP for your tool integration layer, start now. The ecosystem is mature enough to be useful and still early enough that getting ahead of it has real advantages.

Enterprise IT and security teams. MCP adoption is happening whether or not you’ve approved it. Your developers are already using it. Better to have a governance position now than catch up later when agents have broad system access.

Indie developers and solo builders. The prebuilt MCP server ecosystem is genuinely useful. Search, file access, API connectors, web browsing: a lot of common agent capabilities are already packaged. Worth checking what exists before building something custom.

Teams still on custom integration layers. Not every custom integration needs to be replaced immediately. But new integrations should probably start as MCP-compatible. The migration cost only grows with time.

What People Get Wrong About MCP

The most common misconception is treating MCP as an Anthropic-specific thing. It started there, but the Linux Foundation governance move means it belongs to the ecosystem now. Building on MCP is not betting on Anthropic. It’s betting on the standard, which is a different risk profile.

The second mistake is thinking MCP handles the hard parts of agent design. It handles tool connectivity. It doesn’t handle task planning, error recovery, memory management, or output validation. Those are still your problems. MCP just means you spend less time on plumbing and more time on the actual agent logic.
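To show what "still your problems" looks like in practice, here is a small sketch of error recovery and output validation wrapped around a tool call. The `call_with_recovery` helper and its parameters are assumptions made for illustration; nothing like it ships with the protocol.

```python
import time
from typing import Any, Callable, Optional


def call_with_recovery(tool: Callable[[], Any],
                       validate: Callable[[Any], bool],
                       retries: int = 3,
                       backoff_s: float = 0.0) -> Any:
    """Call a tool, retrying on exceptions or on output that fails validation."""
    last_error: Optional[Exception] = None
    for attempt in range(retries):
        try:
            result = tool()
            if validate(result):
                return result
            last_error = ValueError(f"invalid output: {result!r}")
        except Exception as exc:  # broad on purpose: this is a sketch
            last_error = exc
        if attempt < retries - 1:
            # Exponential backoff between attempts.
            time.sleep(backoff_s * (2 ** attempt))
    raise RuntimeError(f"tool failed after {retries} attempts") from last_error
```

The retry count, the backoff schedule, and what counts as valid output are all agent-level decisions. A standardized connection to the tool does not make them for you.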

The third: assuming the current spec is final. MCP is actively evolving. Pin your versions, follow the changelog, and budget time for spec updates. Infrastructure that’s growing this fast will have breaking changes.
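One way to make pinning concrete: MCP servers report a date-stamped `protocolVersion` in their response to `initialize`, so a client can refuse to proceed when it sees a revision it has not been tested against. The sketch below assumes that shape. The pinned set and the `check_protocol_version` helper are illustrative, so substitute the revisions you have actually tested.

```python
# Illustrative pins only: list the spec revisions your agent has been tested against.
SUPPORTED_VERSIONS = {"2025-03-26", "2025-06-18"}


def check_protocol_version(initialize_result: dict) -> str:
    """Fail fast if the server reports a protocol revision we have not pinned."""
    version = initialize_result.get("protocolVersion")
    if version not in SUPPORTED_VERSIONS:
        raise RuntimeError(
            f"Untested MCP protocol version {version!r}; "
            f"pinned versions are {sorted(SUPPORTED_VERSIONS)}"
        )
    return version
```

Failing loudly at connection time is usually cheaper than discovering a spec change halfway through an agent run.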

External Links

- “MCP 97M installs announcement” → https://www.anthropic.com/news

- “Linux Foundation MCP governance” → https://linuxfoundation.org

- “MCP official documentation” → https://modelcontextprotocol.io

FAQ

Q: What is Model Context Protocol (MCP)?

A: MCP is an open standard that lets AI models connect to external tools, APIs, and data sources in a consistent way. Think of it as a shared language for AI agents to talk to the rest of your software stack.

Q: Who created MCP?

A: Anthropic created and open-sourced MCP. The Linux Foundation now governs it, making it neutral open infrastructure rather than a vendor-specific standard.

Q: Why does 97 million installs matter?

A: It signals MCP has crossed from “interesting experiment” to foundational infrastructure. Every major AI provider now supports it, which means the ecosystem around it is growing fast and the risk of betting on it has dropped significantly.

Q: Do I need MCP to build AI agents?

A: No, you can still build custom integrations. But MCP reduces the long-term maintenance cost of tool connectivity and makes multi-model architectures more practical. New projects should seriously evaluate it as a starting point.

Q: Is MCP only for Anthropic’s Claude?

A: No. Every major AI provider now ships MCP-compatible tooling. It’s model-agnostic by design.

Q: What are the security risks of MCP?

A: Broader tool access means broader attack surface. MCP standardizes connectivity, not security. Teams adopting it need explicit policies around what agents can access, what actions they can take, and how that’s audited.

Q: Where do I find existing MCP servers?

A: The official MCP repository on GitHub has a growing directory. Most major tools like Google Drive, Slack, and various databases already have community or official MCP servers available.

Q: Is MCP stable enough for production use?

A: Yes, with caveats. The core spec is stable enough to build on, but it’s still actively evolving and breaking changes are possible. Pin your versions and monitor the changelog. Large enterprises should have a migration plan for spec updates.