Stanford’s AI Index drops every April, and it’s the one report I actually read in full instead of skimming the headline summary and moving on. It’s independently produced, densely sourced, and doesn’t have a lab with billions on the line writing the conclusions.

The 2026 edition runs over 400 pages. I went through it so you don’t have to. Here are the findings that actually changed how I think about where AI is right now.

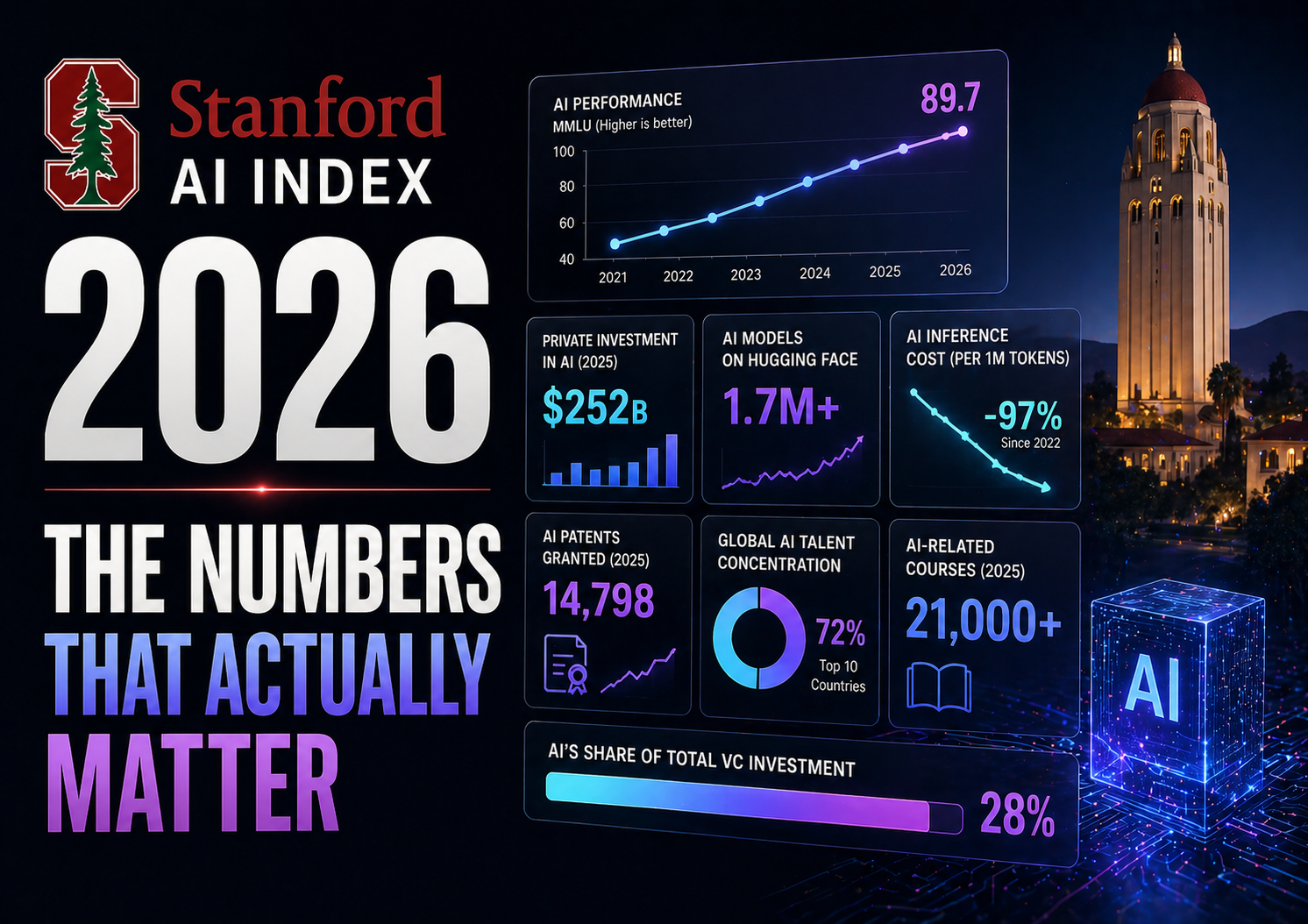

AI Progress Is Not Slowing Down

This one matters because a vocal group of skeptics spent most of 2025 arguing that model capabilities had plateaued. The data doesn’t support that.

On SWE-bench Verified, a benchmark where models resolve real GitHub issues, scores climbed from 60% to nearly 100% of the human baseline in a single year. Frontier models now meet or exceed human performance on PhD-level science questions, multimodal reasoning, and competition mathematics. On Humanity’s Last Exam, a benchmark designed by subject-matter experts to represent the hardest problems in their fields, the top model scored 8.8% in early 2025. By April 2026, Claude Opus 4.6 and Gemini 3.1 Pro are both topping 50%.

That’s not a plateau. That’s a steep curve that shows no sign of flattening yet.

The US-China Gap Is Basically Gone

As of March 2026, the top US model leads China’s best by just 2.7% on performance rankings. US and Chinese models have traded the top spot multiple times since early 2025. DeepSeek-R1 briefly matched the leading US model in February 2025.

The US still produces more top-tier models (50 notable releases in 2025 vs China’s 30) and leads in high-impact patents and private investment. But China leads in total publication volume, citations, total patent output, and industrial robot installations. And China’s government has deployed an estimated $184 billion in state-backed investment into AI firms since 2000, which means private investment comparisons significantly understate China’s total AI spend.

The days of treating frontier AI as a US-exclusive capability are over.

Adoption Is Moving Faster Than Any Technology in History

Generative AI hit 53% population adoption within three years of mainstream availability. For context, the personal computer took over a decade to reach comparable penetration. The internet took longer.

Organizational adoption reached 88% of surveyed companies. Four out of five US university students now use AI for coursework. The consumer value of generative AI tools in the US reached $172 billion annually by early 2026, up from $112 billion a year earlier. The median value per user tripled over that same period. Most of these tools are still free or close to it.

That last point is worth sitting with. People are getting $172 billion in annual value from tools that largely cost them nothing. That’s an unusual economic situation with implications nobody has fully worked through yet.

The Most Capable Models Are the Least Transparent

This is the finding that concerns me most and gets the least coverage.

The Foundation Model Transparency Index tracks how openly AI companies disclose training data, compute requirements, capabilities, risks, and usage policies. Average scores dropped from 58 to 40 out of 100 this year. The report notes directly that the most capable models now tend to disclose the least information.

As frontier AI becomes more consequential, the companies building it are becoming less transparent about how it works. That’s a trend moving in the wrong direction at exactly the wrong time.

Investment Numbers That Are Hard to Comprehend

Global corporate AI investment reached $581.69 billion in 2025, a 129.9% increase year over year. Private investment alone grew 127.5% to $344.7 billion. Generative AI accounted for nearly half of all private AI funding, growing over 200% from 2024.

A few specific milestones from 2025: OpenAI raised $40 billion at a $300 billion valuation. Nvidia became the first public company worth $4 trillion. Anthropic raised $13 billion at a $183 billion valuation. Google reported over $150 billion in annual capital expenditure.

The number of newly funded AI companies rose 71%. Billion-dollar funding events nearly doubled from 15 to 28 in a single year.
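To put those growth rates in perspective, here is a quick back-of-the-envelope sketch that works backward from the 2025 totals to the 2024 baselines they imply. It uses only the figures quoted above; the function and variable names are my own, and the results are rounded estimates rather than numbers taken from the report.

```python
def implied_baseline(current: float, pct_increase: float) -> float:
    """Back out the prior-year value from a current value and a percent increase."""
    return current / (1 + pct_increase / 100)


# Figures as quoted in this article (billions of USD, percent growth).
corporate_2025, corporate_growth = 581.69, 129.9
private_2025, private_growth = 344.7, 127.5

corporate_2024 = implied_baseline(corporate_2025, corporate_growth)
private_2024 = implied_baseline(private_2025, private_growth)

# Billion-dollar funding events went from 15 to 28.
events_multiple = 28 / 15

print(f"Implied 2024 corporate investment: ${corporate_2024:.1f}B")
print(f"Implied 2024 private investment:   ${private_2024:.1f}B")
print(f"Billion-dollar funding events grew {events_multiple:.2f}x")
```

By this arithmetic, the implied 2024 baselines are roughly $253 billion in corporate investment and $152 billion in private investment, and the count of billion-dollar funding events grew about 1.87x.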

This level of capital concentration into a single technology sector is historically unusual. Whether it produces returns proportional to the investment is the open question hanging over the entire industry.

Education Is Completely Unprepared

80% of US high school and college students use AI for school-related tasks. Only half of middle and high schools have any AI policy in place. Just 6% of teachers say those policies are actually clear.

That gap between usage and governance is already producing problems. The report notes that AI engineering skills are growing fastest not in the US but in the UAE, Chile, and South Africa, which suggests the talent landscape is shifting in ways that don’t match current assumptions about where AI capability will concentrate.

The Jagged Frontier Problem

One of the most useful concepts in the report is what researchers call the “jagged frontier.” Models can now solve extraordinarily complex problems while still failing at surprisingly simple ones. A model that passes a PhD-level chemistry exam might stumble on basic spatial reasoning or a straightforward counting task.

This matters practically because benchmark scores don’t tell you where a model will fail in your specific workflow. The report is direct about this: knowing that a model scores 75% on a legal reasoning benchmark tells you very little about how well it would actually function inside a law firm’s day-to-day operations. Benchmark comparisons still can’t replace real-world testing in your specific context.
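If you want to see what testing in your own context might look like, here is a minimal sketch of a per-category check. Everything in it is hypothetical: the test cases, the categories, and `stub_model`, which returns canned answers in place of a real model call. The idea is simply to score each task type separately, so a weak spot doesn’t get averaged away inside one overall number.

```python
from collections import defaultdict
from typing import Callable

# Hypothetical test cases: replace these with examples from your own workflow.
CASES = [
    {"category": "legal_reasoning",
     "prompt": "Is a verbal agreement ever enforceable?",
     "expected": "yes"},
    {"category": "legal_reasoning",
     "prompt": "Which party bears the burden of proof in a civil claim?",
     "expected": "plaintiff"},
    {"category": "counting",
     "prompt": "How many exhibits are listed: A, B, C, D?",
     "expected": "4"},
    {"category": "counting",
     "prompt": "How many times does 'shall' appear in: shall, may, shall, shall?",
     "expected": "3"},
]


def stub_model(prompt: str) -> str:
    """Placeholder for a real model call (an assumption, not a real API)."""
    canned = {
        CASES[0]["prompt"]: "Yes, in many jurisdictions.",
        CASES[1]["prompt"]: "Usually the plaintiff.",
        CASES[2]["prompt"]: "There are 4 exhibits.",
        CASES[3]["prompt"]: "It appears 2 times.",  # deliberately wrong
    }
    return canned.get(prompt, "")


def accuracy_by_category(
    model: Callable[[str], str], cases: list[dict[str, str]]
) -> dict[str, float]:
    """Score each category separately using a simple substring match."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for case in cases:
        totals[case["category"]] += 1
        if case["expected"].lower() in model(case["prompt"]).lower():
            correct[case["category"]] += 1
    return {cat: correct[cat] / totals[cat] for cat in totals}


results = accuracy_by_category(stub_model, CASES)
# Print weakest categories first so failures are hard to miss.
for category, acc in sorted(results.items(), key=lambda kv: kv[1]):
    print(f"{category:<20} {acc:.0%}")
```

Substring matching is a crude grader, and four cases prove nothing. In practice you would swap in a real model call and a larger set of cases, and probably a stricter way of judging answers.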

What This Actually Means for AI Tool Users

Three things stand out as practical takeaways from this report.

First, the capability gains are real and accelerating. If you tried an AI tool for a specific task six months ago and it wasn’t good enough, try it again. The benchmarks suggest meaningful improvement in that timeframe.

Second, the transparency decline means you should be cautious about over-relying on any single closed model for high-stakes decisions. The less you know about how a system works, the harder it is to understand when and why it will fail.

Third, the adoption curve means the competitive gap between organizations using AI well and those still evaluating it is widening every month. The median user value tripling in a year is not a statistic about early adopters anymore. It’s about the mainstream.

FAQ

Q: What is the Stanford AI Index?

A: It’s an annual report from Stanford University’s Human-Centered AI Institute that independently tracks AI progress across technical capabilities, investment, adoption, workforce impact, and public perception. It’s widely cited by governments, researchers, and media because it has no financial stake in the findings.

Q: Is AI progress actually slowing down in 2026?

A: The data says no. Scores on SWE-bench Verified, a coding benchmark, jumped from 60% to nearly 100% of the human baseline in a single year. Top models now meet or exceed human performance on PhD-level science questions. The capability curve is still steep.

Q: How fast has AI adoption actually been compared to other technologies?

A: Generative AI hit 53% population adoption in three years. The personal computer and the internet both took significantly longer to reach comparable penetration. It’s the fastest mainstream technology adoption on record.

Q: Is the US still ahead of China in AI?

A: Only marginally on model performance, with a 2.7% gap as of March 2026. The US leads in private investment and high-impact patents. China leads in publications, total patents, and industrial robots. Private investment comparisons also likely understate China’s true total spend, because they leave out an estimated $184 billion in state-backed investment since 2000.

Q: Why are the most capable AI models becoming less transparent?

A: The report doesn’t give a definitive answer but the pattern is clear: as models become more commercially valuable, companies are sharing less about how they work. Transparency scores dropped from 58 to 40 out of 100 this year.

Q: What is the “jagged frontier” in AI?

A: The idea that AI models can solve very hard problems while failing at surprisingly simple ones. High benchmark scores don’t guarantee reliable performance in your specific real-world use case.

Q: How much are people actually getting from free AI tools?

A: The estimated US consumer surplus from generative AI reached $172 billion annually by early 2026, with median value per user tripling in a single year. Most of these tools are still free or very low cost.

Q: Where can I read the full Stanford AI Index 2026?

A: It’s free at hai.stanford.edu. The full report is over 400 pages but the executive summary covers the key findings in a manageable format.

External Links Referenced:

- Stanford HAI 2026 AI Index full report → hai.stanford.edu/ai-index/2026-ai-index-report

- Foundation Model Transparency Index → crfm.stanford.edu